Cole: I always bought into the idea that recording at higher sample rates sounds better. Some people I considered to be good engineers actually said that if you can record at 96 kHz, then do it. If you don’t mind me asking, how did you come to the conclusion to keep the sample rate of your sessions at 44.1 kHz instead of using higher sample rates like 48, 88.2, or 96 kHz? From what I know, you can’t record at both 48 kHz and 44.1 kHz at the same time, so it would be a bit difficult to A/B the two, right?

It’s kind of difficult to explain, and it also really depends on what kind of project you are working on. Certainly there are situations that warrant recording at a higher sample rate. But 90%-95% of the time when recording, especially in genres where live instrumentation isn’t really used or needed, it is better to remain at a sample rate of 44.1 kHz. To help explain, I think it’s best to first understand what digital audio really is.

So, essentially, you have to think of digital audio as the summation of a lot of math equations, those equations coming from the process of converting an analog signal to a digital signal, commonly known as A/D conversion. The architecture of those equations is determined by both sample rate and bit depth, which I’ll explain shortly. The final sum of those equations (which we call an audio file) is never quite a perfect answer; it’s always some kind of number with a lot of decimal places.

What makes the math imperfect is that the sum is usually a rounded result of all the equations involved in converting an analog signal to a digital signal. The accuracy of that math is determined by the quality of the A/D process, which is done by a device called the A/D converter (you would also know it as your audio interface). Depending on the quality of your A/D converter, the number of decimal places in the sum can be short or extremely large. Top-of-the-line A/D converters, the ones that cost $12,000 and up, round the sum of these equations to a trillion or more decimal places, which means the sum is going to be a lot more accurate and detailed. Low-end A/D converters round these equations to a few hundred or a few thousand decimal places, so the sum is just not going to be as accurate and detailed.

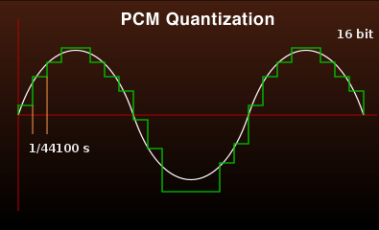

So what exactly is ‘the sample rate’? As a comparatively ‘simple’ explanation, it is essentially a consecutive series of snapshots over a specific duration of time, like a video or motion picture. But we’re not talking about video, we’re talking about audio. What defines the sample rate is how many of these snapshots of audio occur in 1 second, as well as how consistent the interval between each snapshot is. So what 48 kHz really means, when you break that number down, is that there are 48,000 snapshots of audio in 1 second. 44.1 kHz means that there are 44,100 snapshots of audio in 1 second.
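To put numbers on the snapshot idea, here is a minimal Python sketch that works out how far apart the snapshots are at a few common sample rates. The function name `sample_interval_us` is just an illustrative choice, not something from any audio tool.

```python
def sample_interval_us(sample_rate_hz: int) -> float:
    """Time between two consecutive snapshots, in microseconds."""
    return 1_000_000 / sample_rate_hz


for rate in (44_100, 48_000, 96_000):
    interval = sample_interval_us(rate)
    print(f"{rate} Hz: {rate} snapshots per second, "
          f"one every {interval:.2f} microseconds")
```

At 44.1 kHz a snapshot is taken roughly every 22.68 microseconds, and at 48 kHz roughly every 20.83 microseconds.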

It’s also helpful to understand what ‘Bit Depth’ is in this conversation. Once again, as a comparatively ‘simple’ explanation, ‘Bit Depth’ represents how detailed each one of these snapshots is, kind of like the number of colors in a photograph. Black and white photos represent the lowest bit depth, and photos with many millions of color combinations represent the highest bit depths. Once again, since we’re not actually talking about photos, the detail we are talking about in each snapshot of audio is the number of decibel level steps across the frequency range of human hearing, which is roughly 20 Hz to 18,000 Hz.

16 Bit audio has 65,536 steps while 24 Bit audio has 16,777,216 steps. Essentially, 24 Bit audio has 256 times the number of potential amplitude steps as 16 bit audio.
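Those step counts come straight from powers of two: each extra bit doubles the number of available amplitude steps. A quick Python sketch of the arithmetic (the helper name `amplitude_steps` is made up for illustration):

```python
def amplitude_steps(bit_depth: int) -> int:
    """Number of distinct amplitude values an integer sample of this bit depth can hold."""
    return 2 ** bit_depth


steps_16 = amplitude_steps(16)
steps_24 = amplitude_steps(24)

print(f"16 bit: {steps_16:,} steps")
print(f"24 bit: {steps_24:,} steps")
print(f"24 bit has {steps_24 // steps_16} times as many steps as 16 bit")
```

The extra 8 bits are where the factor of 256 comes from, since 2 to the power of 8 is 256.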

Now that we roughly know how digital audio, sample rate and bit depth are defined, we can go back to your original question about why I mostly choose to record and work in a 44.1 kHz, 24 bit format in our DAW, as opposed to a higher sample rate.

You have to think of the A/D converter as essentially a sonic camera. You can compare recording digital audio through A/D converters of different quality to animating photographs from different kinds of cameras. A really top-end camera is going to take a highly detailed snapshot, and a cheaper consumer camera is going to take a slightly less detailed snapshot. If you were to take 48,000 snapshots in a row super quickly and animate them, it’s pretty clear that the animation from the top-end camera is going to look way better than the animation from the consumer camera. The same would apply if you were to take 44,100 snapshots.

So now, when converting audio from 48 kHz to 44.1 kHz, we essentially have to remove 3,900 snapshots from every second of audio. But which snapshots are we going to remove? And when we remove those snapshots, what happens to all the extra space that was just created? The interval between each snapshot is not going to be consistent anymore. It would go something like snapshot, snapshot, missing snapshot, snapshot, snapshot, missing snapshot, instead of just snapshot after snapshot after snapshot. Because we had to remove those 3,900 snapshots, if you were to animate the remaining 44,100 snapshots, what you would see is going to be less smooth and consistent. It would not be representative of what the animation of 48,000 snapshots looks like, because the intervals between snapshots wouldn’t be the same. So converting audio down from 48 kHz to 44.1 kHz means you are removing information from the 48 kHz file to make it a 44.1 kHz file. Anytime you’re removing information from an audio file like that, the end result is not going to be the same as the original audio file, even if it’s only the slightest of differences.
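The numbers behind that conversion can be checked with a few lines of Python. This is only the arithmetic of the two snapshot grids, not a model of how any particular sample rate converter actually processes audio, and the variable names are illustrative.

```python
from fractions import Fraction

SOURCE_RATE = 48_000  # snapshots per second before conversion
TARGET_RATE = 44_100  # snapshots per second after conversion

# How many fewer snapshots there are in each second of audio.
fewer_per_second = SOURCE_RATE - TARGET_RATE

# The ratio between the two rates, reduced to lowest terms.
ratio = Fraction(TARGET_RATE, SOURCE_RATE)

# Count the 44.1 kHz snapshot positions within one second that fall
# exactly on a 48 kHz snapshot position.
aligned = sum(
    1 for k in range(TARGET_RATE) if (k * SOURCE_RATE) % TARGET_RATE == 0
)

print(f"Fewer snapshots per second: {fewer_per_second}")
print(f"Rate ratio: {ratio.numerator}/{ratio.denominator}")
print(f"44.1 kHz positions that line up with a 48 kHz position: {aligned}")
```

The ratio reduces to 147/160, so every 160 snapshots at 48 kHz correspond to 147 snapshots at 44.1 kHz, and only 300 of the 44,100 new snapshot positions in a second line up exactly with an original one.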

So that’s why I keep everything at 44.1 kHz: I’m not losing any tiny pieces of information from the audio file in the conversion process. Hope this is somewhat understandable.
